Cluster Configuration Sync

Note

Cluster sync is still considered experimental. If you have any issues or comments regarding this module, please send an e-mail to support@diladele.com.

Web Safety can automatically sync configuration settings between a designated master node and any number of cluster nodes.

To enable configuration sync:

Upload the same Root CA certificate to all nodes of the cluster. Slave nodes connect to the master node over HTTPS, and the master and slave nodes authenticate each other using the Root CA’s private key and certificate. For these connections to succeed, the Root CA must therefore be the same on all nodes.

Choose one node as the master node. From then on, make all changes to the web filter configuration on this node. Slave nodes will automatically get their configuration from the master node.

Configure the web filtering policies and Squid proxy settings on the master node as desired using the Web UI.

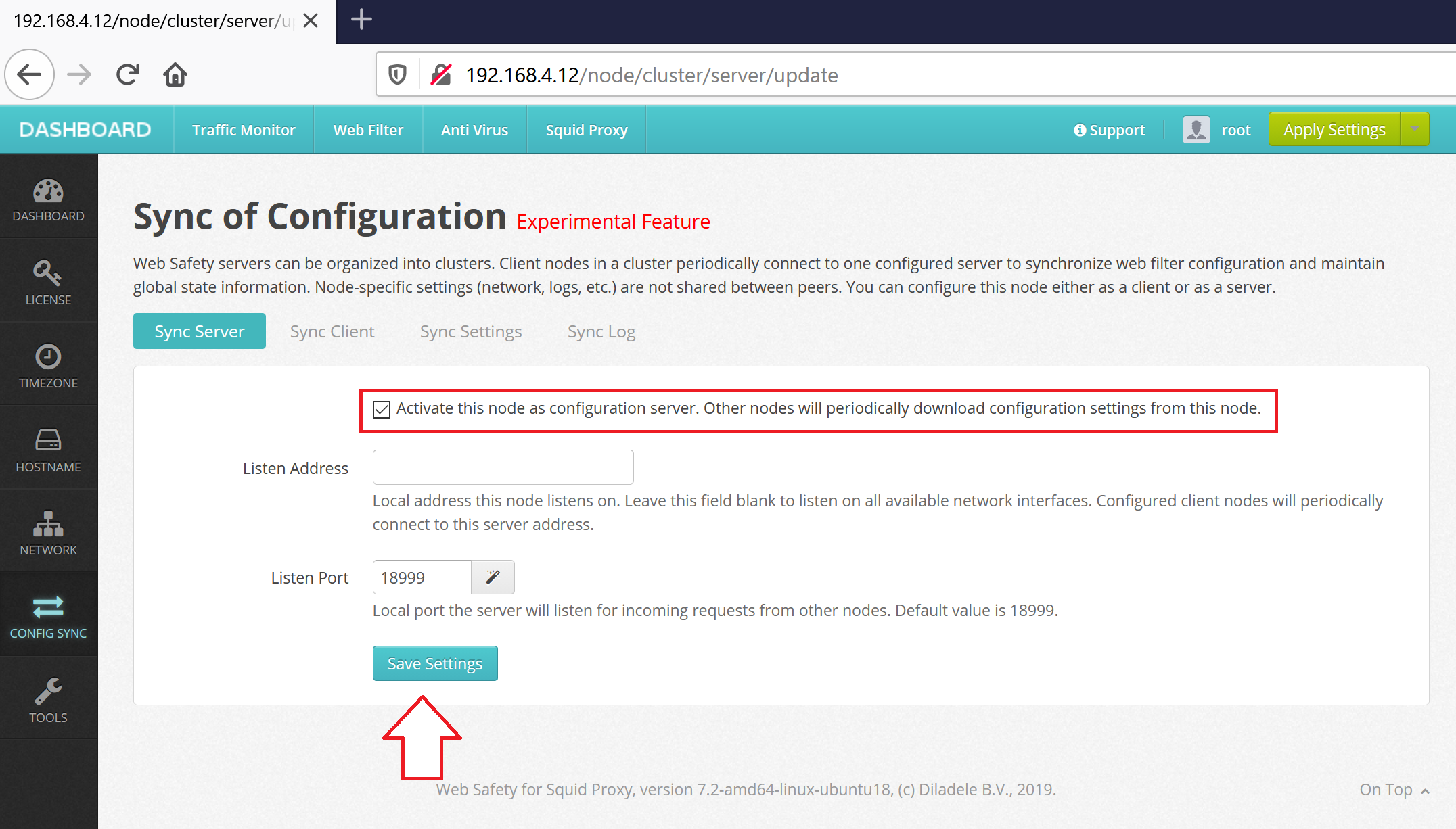

Configure the master node as the configuration server. This can be done in Web UI / Dashboard / Config Sync, as indicated on the following screenshot. Click Save and Restart.

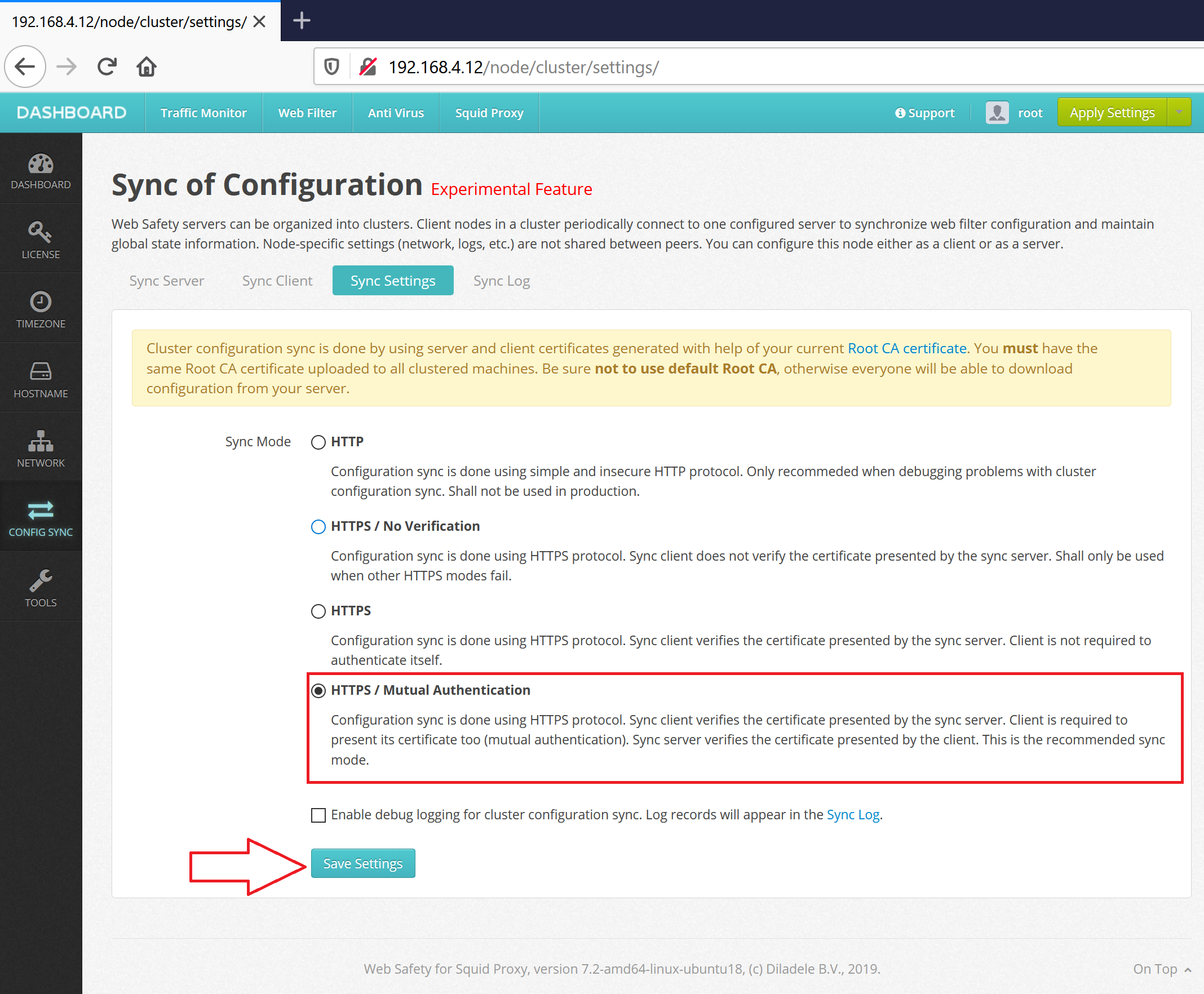

Select the type of configuration sync as shown on the following screenshot. The recommended sync type is HTTPS with mutual authentication of server and client.

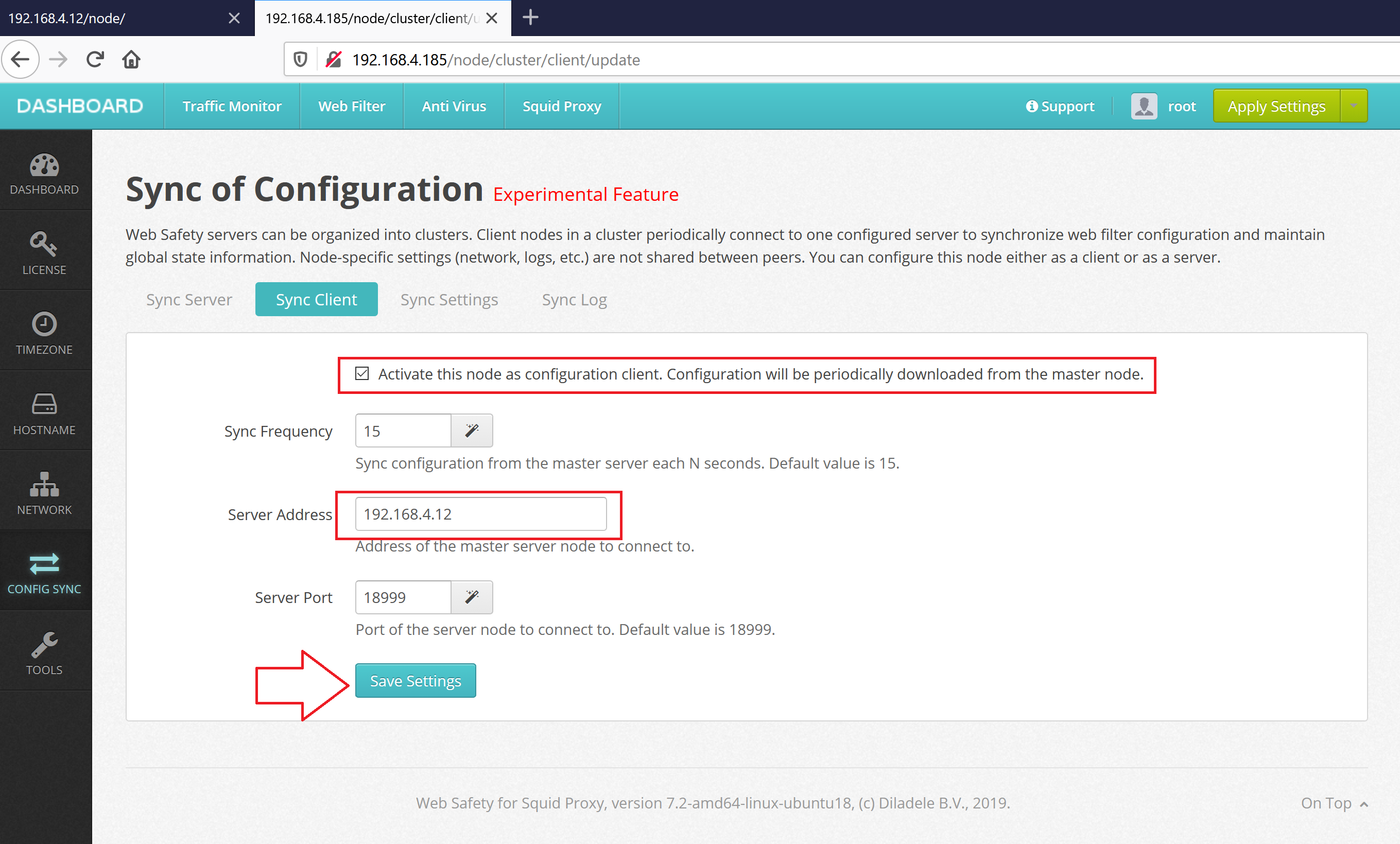

Configure any number of slave nodes as configuration clients. This can be done in Web UI / Dashboard / Config Sync, as indicated on the following screenshot. Do not forget to enter the IP address of the master server and select the same sync type as on the server. Click Save and Restart.

From now on, slave nodes will automatically download their configuration from the master node. All services on the client are restarted automatically by the /opt/websafety/bin/cluster.sh script. The restart log is stored in /opt/websafety/var/log/cluster_sh.log, and the sync log is shown in the Web UI.
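Because mutual authentication only succeeds when every node holds the same Root CA, it can help to compare certificate fingerprints before enabling sync. The following is a minimal sketch, not part of Web Safety: it assumes you have exported the Root CA certificate from each node as PEM text yourself, and the function names and node labels are hypothetical.

```python
import hashlib
import ssl


def pem_fingerprint(pem_text: str) -> str:
    """Return the SHA-256 fingerprint (hex) of one PEM-encoded certificate."""
    # Convert the PEM text to raw DER bytes, then hash those bytes.
    der = ssl.PEM_cert_to_DER_cert(pem_text)
    return hashlib.sha256(der).hexdigest()


def same_root_ca(pem_by_node: dict[str, str]) -> bool:
    """Return True when every node's certificate has the same fingerprint.

    pem_by_node maps a node label (hypothetical, e.g. "master") to the PEM
    text of the Root CA certificate exported from that node.
    """
    fingerprints = {pem_fingerprint(pem) for pem in pem_by_node.values()}
    return len(fingerprints) == 1
```

If `same_root_ca` returns False, print `pem_fingerprint` for each node to find the one whose certificate differs, then upload the correct Root CA to it.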

Note

All nodes in the cluster MUST have the same version of Web Safety installed and MUST run on the same operating system.

Note

By default, cluster sync uses port 18999. If you are using a firewall on the Squid nodes, you may need to add the following iptables rule on all cluster nodes (here, ens160 is the NIC name used in the virtual appliance; yours might be different).

-A INPUT -i ens160 -p tcp --dport 18999 -j ACCEPT
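To confirm that the firewall rule took effect, you can try opening a TCP connection to the sync port from a slave node. This is a minimal sketch using only the Python standard library; the function name and the example address 192.0.2.10 are hypothetical, and a successful connection only shows that the port is reachable, not that sync itself is configured correctly.

```python
import socket


def port_reachable(host: str, port: int = 18999, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        # create_connection raises OSError on refusal, timeout, or no route.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Replace the placeholder address with the IP of your master node.
    master_ip = "192.0.2.10"
    state = "reachable" if port_reachable(master_ip) else "NOT reachable"
    print(f"{master_ip}:18999 is {state}")
```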